Today I need help. 🙂

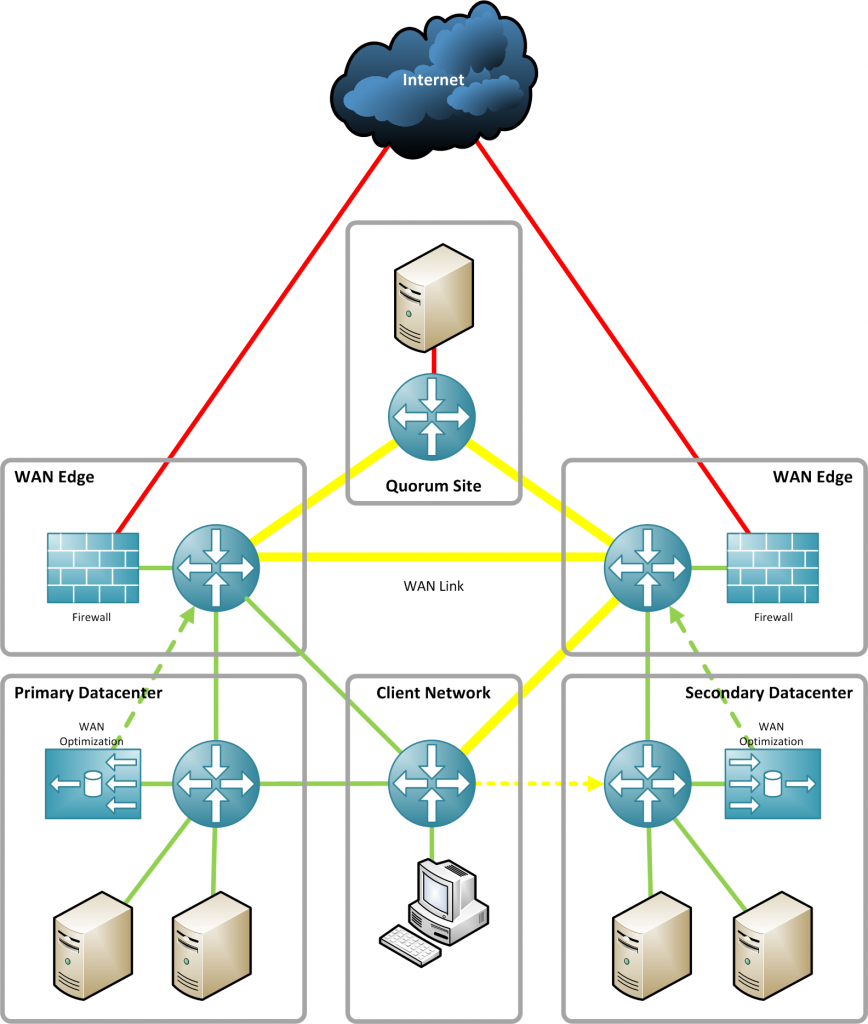

Currently I’m planning a multi-site datacenter at home. 😉 I want to test some new technologies like Cisco VXLAN, site-to-site replication, vMotion over WAN, and so on. But I’m not a professional networking guy, and that’s why I’m not sure whether the following network design is comparable to a real-world datacenter network. I don’t need any redundant components or network designs like a 2- or 3-tier network architecture (Core-Distribution-Access). My lab should be simple, but not too simple. 😉 Feel free to comment…

Hi Tschokko!

Will this be for production or testing?

Something like this has been on my wishlist for a few months now.

I have no hands-on experience with Cisco’s VXLAN, so I can’t really help there.

What kind of input do you expect? There are many ways to go from here.

Regards,

Mario

You will need latency, packet drops and artificial limits on bandwidth … google for “netem linux” or “linux tc”/“linux hfsc”/“linux htb”/“linux imq” (these make it possible to build a Linux bridge that is able to shape/“damage” traffic… I’ve used something similar to simulate 2 DCs, e.g. here http://www.oracle.com/technetwork/articles/wartak-rac-vm-099826.html). You are probably a VMware guy (I just prefer XEN), so perhaps it would be possible to use netem/hfsc/htb

a) inside VMware, if it has any sort of userland close to the hypervisor’s networking (just like XEN’s dom0)

b) by routing/bridging through a new “egress-point”-like Linux VM that does nothing but bridging/routing

Good luck with your project 😉
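To make the netem suggestion a bit more concrete, here is a minimal sketch in Python that only builds the `tc` command lines for an inter-DC link; it does not execute them. The interface names (`eth1`, `eth2`) and the numbers (20 ms delay, 2 ms jitter, 0.5 % loss, 100 Mbit/s) are assumptions made up for illustration, not values from this thread. The `rate` option needs a reasonably recent kernel and iproute2.

```python
def netem_command(dev, delay_ms, jitter_ms=0, loss_pct=0, rate_mbit=None):
    """Return the argv list for a `tc` call that puts a netem qdisc on `dev`.

    netem shapes egress traffic only, so on a two-port bridge you would
    apply it to both member interfaces to impair both directions.
    """
    if delay_ms < 0 or jitter_ms < 0:
        raise ValueError("delay and jitter must be non-negative")
    if not 0 <= loss_pct <= 100:
        raise ValueError("loss must be a percentage between 0 and 100")

    # `replace` creates the root qdisc or overwrites an existing one.
    cmd = ["tc", "qdisc", "replace", "dev", dev, "root", "netem",
           "delay", f"{delay_ms:g}ms"]
    if jitter_ms:
        cmd.append(f"{jitter_ms:g}ms")          # optional jitter after the delay
    if loss_pct:
        cmd += ["loss", f"{loss_pct:g}%"]       # random packet loss
    if rate_mbit is not None:
        cmd += ["rate", f"{rate_mbit:g}mbit"]   # artificial bandwidth limit
    return cmd


if __name__ == "__main__":
    # Hypothetical bridge members facing DC 1 and DC 2.
    for dev in ("eth1", "eth2"):
        print(" ".join(netem_command(dev, 20, 2, 0.5, 100)))
```

Run as root on the bridge VM, the printed commands would add roughly 40 ms of round-trip latency between the two sites, since each direction gets its own 20 ms delay.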

My idea is to place a Linux-based bridge on the links that connect both simulated datacenters. A few weeks ago I found a nice tutorial on how to implement such a bridge and control bandwidth and latency.

Currently I have to wait until I have enough money to get some hardware for this project.

@Mario

This is only for testing! I expect to get some experience with stretched cluster configurations and the problems that appear with such configurations.

@Jakub

Your link is dead. 🙁

Well, just drop the last “)” from the URL. http://www.oracle.com/technetwork/articles/virtualization/wartak-rac-vm-099826.html They keep changing the styles and formatting, although you should still get the idea/concept. The driving script for the /sbin/tc binary can be fetched from here: http://jakub.wartak.pl/db/oracle/qosracinit/ (my idea was to manage it from the XEN hypervisor dom0 side rather than from additional VMs acting as bridges, but that will work too). Linux traffic shaping is cool, but not very popular; google IMQ, HFSC, RED (lots of fun, especially with TCP), ESFQ etc. If you want to go low-budget, then go with multiple VMs with low RAM (256-384 MB), all running e.g. Quagga with eBGP and iBGP, plus perhaps two “virtual ISPs” (simulated by another set of VMs with Quagga)… you could even deploy two VMs like this per data center and have some fun with VRRP… Of course you have to set everything up yourself, but you could stick to some ready-to-use VM appliances; I’m not sure whether Vyatta offers images.
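As a rough illustration of the Quagga idea, the following Python sketch renders a minimal `bgpd.conf` for one router VM. Everything concrete in it is hypothetical: the hostname `dc1-edge`, the private AS numbers 65001 and 65100, and the addresses are placeholders for illustration, not part of the setup described here. A neighbor in the same AS becomes an iBGP peer and a neighbor in a different AS (such as a “virtual ISP”) becomes an eBGP peer.

```python
def bgpd_conf(hostname, local_as, router_id, networks, neighbors):
    """Render a minimal Quagga bgpd.conf as a string.

    networks  -- prefixes this router announces, e.g. ["10.1.0.0/16"]
    neighbors -- (peer_address, peer_as) tuples
    """
    lines = [
        f"hostname {hostname}",
        "!",
        f"router bgp {local_as}",
        f" bgp router-id {router_id}",
    ]
    for prefix in networks:
        lines.append(f" network {prefix}")
    for addr, remote_as in neighbors:
        # Same AS on both ends means iBGP, otherwise it is an eBGP session.
        kind = "iBGP" if remote_as == local_as else "eBGP"
        lines.append(f" neighbor {addr} remote-as {remote_as}")
        lines.append(f" neighbor {addr} description {kind} peer")
    lines.append("!")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical edge router in DC 1 with one iBGP and one eBGP peer.
    print(bgpd_conf(
        hostname="dc1-edge",
        local_as=65001,
        router_id="10.255.0.1",
        networks=["10.1.0.0/16"],
        neighbors=[("10.255.0.2", 65001), ("172.16.0.1", 65100)],
    ))
```

A real lab router would also need passwords, interface addressing in zebra, and probably route filters; the sketch only shows how little is needed to bring up the sessions.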

Hello,

Jakub’s link works fine, just remove the right parenthesis. 🙂